GenAI with LLMs (5) Reinforcement learning from human feedback

This post covers reinforcement learning from human feedback (RLHF) from the Generative AI With LLMs course offered by DeepLearning.AI.

Why we need RLHF

RLHF helps to avoid

- Toxic language

- Aggressive responses

- Providing dangerous information

One potentially exciting application of RLHF is the personalization of LLMs, where models learn the preferences of each individual user through a continuous feedback process. Examples include individualized learning plans and personalized AI assistants.

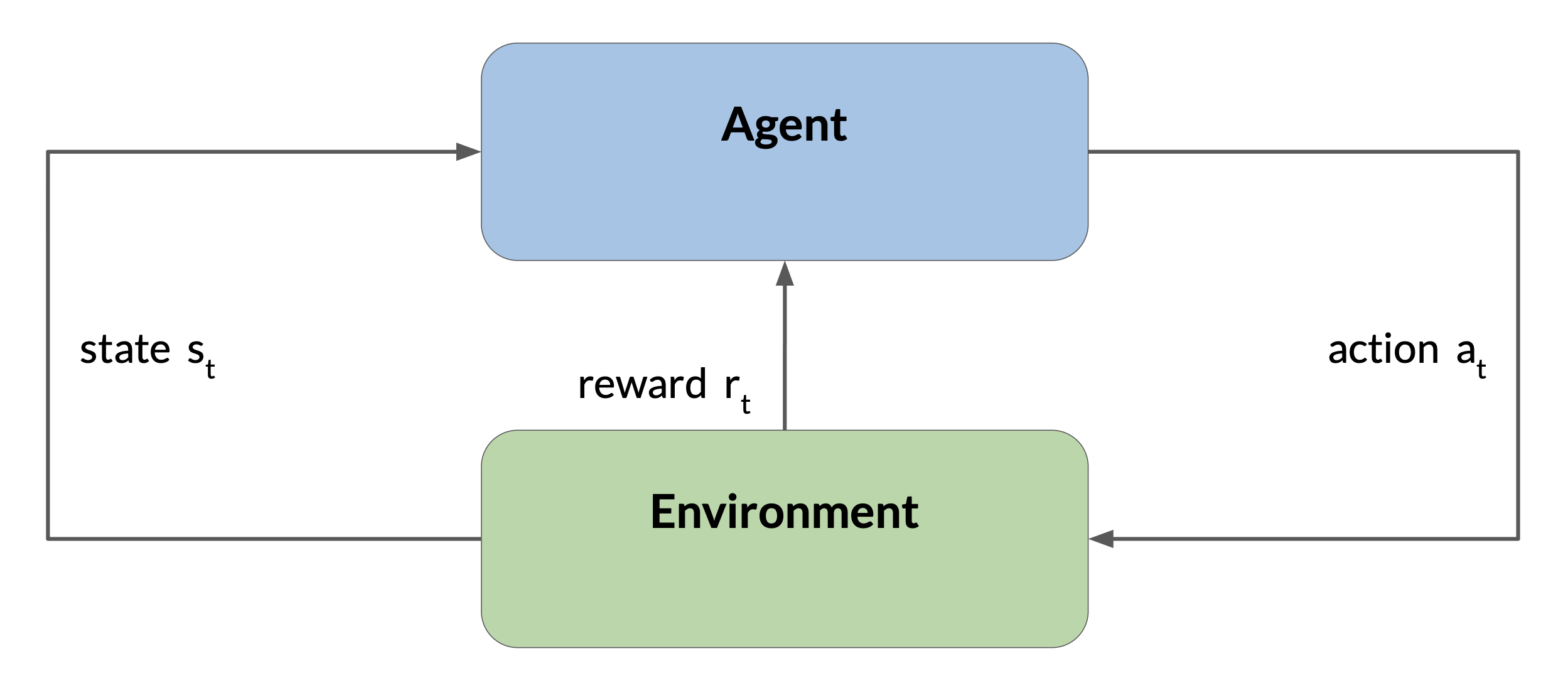

Reinforcement learning

Reinforcement learning is a type of machine learning where an agent learns to make decisions related to a specific goal by taking actions in an environment, with the objective of maximizing some notion of a cumulative reward. In this framework, the agent continually learns from its experiences by taking actions, observing the resulting changes in the environment, and receiving rewards or penalties, based on the outcomes of its actions. By iterating through this process, the agent gradually refines its strategy or policy to make better decisions and increase its chances of success.

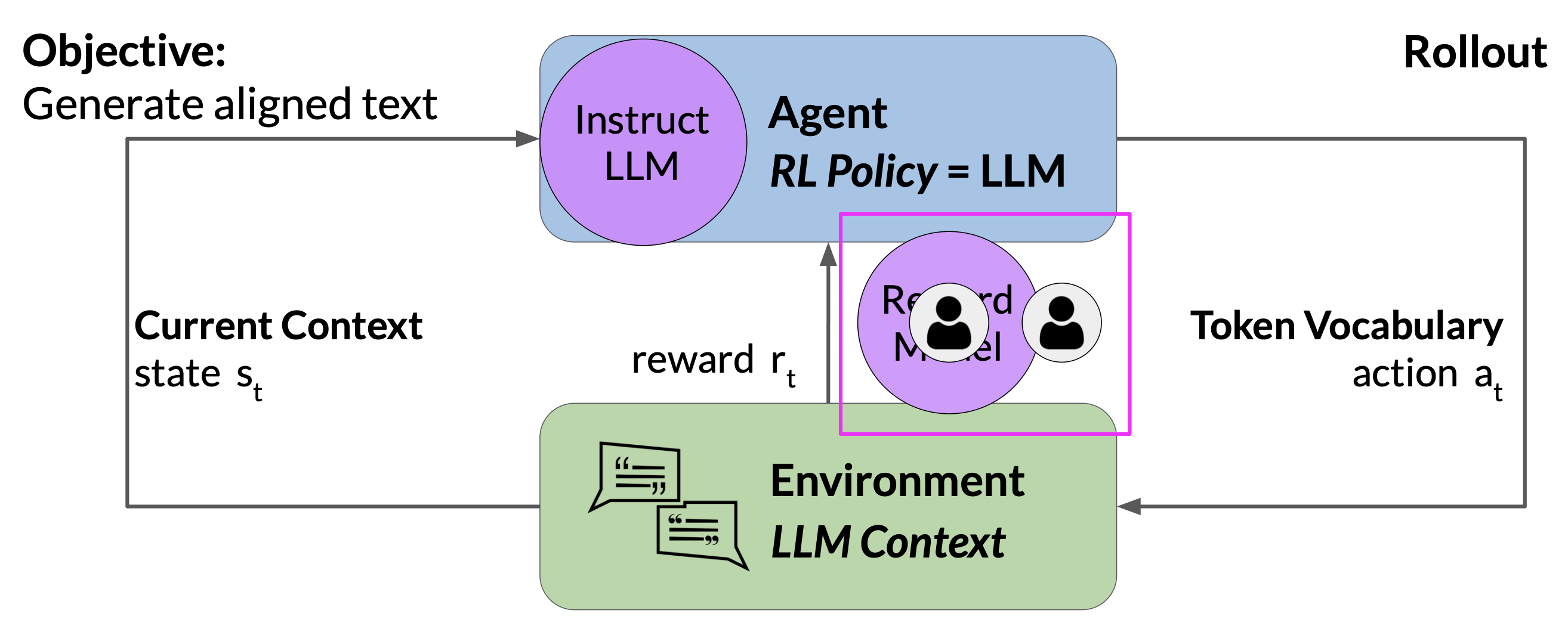

How to determine the reward when fine-tuning an LLM with reinforcement learning?

- One way is to have a human evaluate all of the completions of the model against some alignment metric, such as determining whether the generated text is toxic or non-toxic. This feedback can be represented as a scalar value, either 0 or 1. The LLM weights are then updated iteratively, to maximize the reward obtained from the human classifier, enabling the model to generate non-toxic completions.

- A scalable and practical alternative is the reward model:

- Start with a smaller number of human examples to train the reward model

- Once trained, use the reward model to assess the output of the LLM and assign a reward value, which in turn gets used to update the weights of the LLM and train a new human aligned version.

- Exactly how the weights get updated as the model completions are assessed depends on the algorithm used to optimize the policy.

RLHF: preparation

The first step is to select a model to work with and prepare a dataset for human feedback.

The model should have some capability to carry out the task of interest, whether it is text summarization, question answering, or something else. In general, it is recommended to start with an instruct model that has already been fine-tuned across many tasks and has some general capabilities.

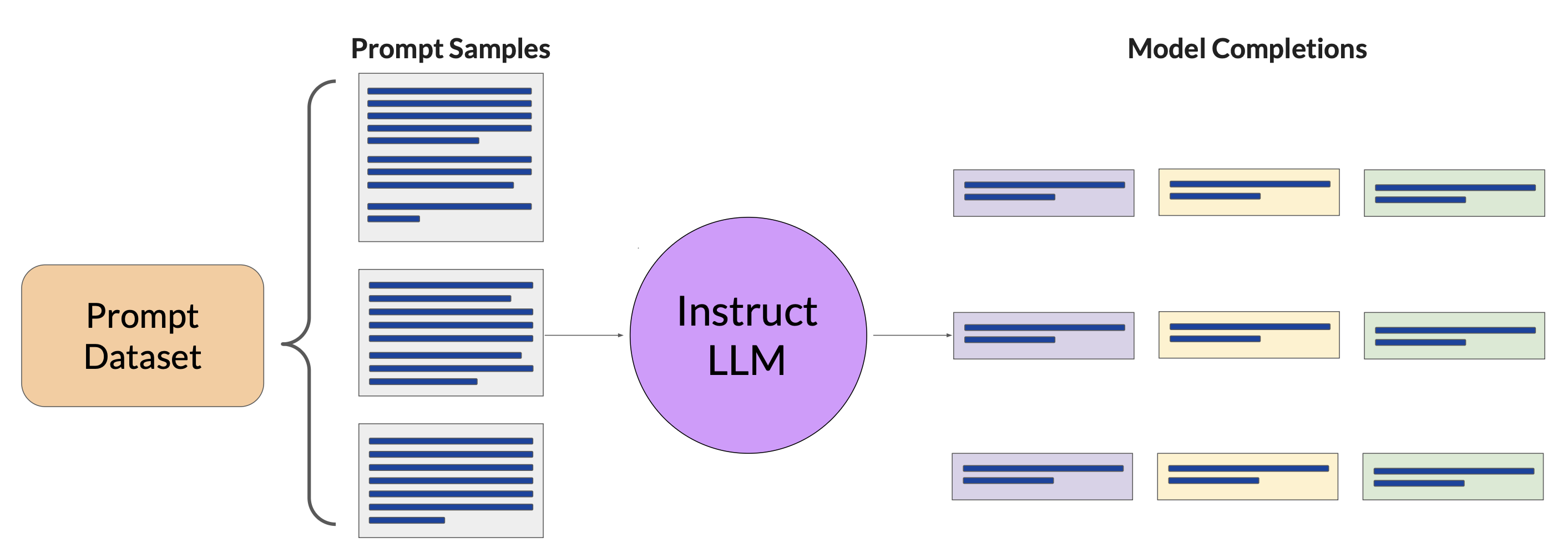

Prepare dataset for human feedback

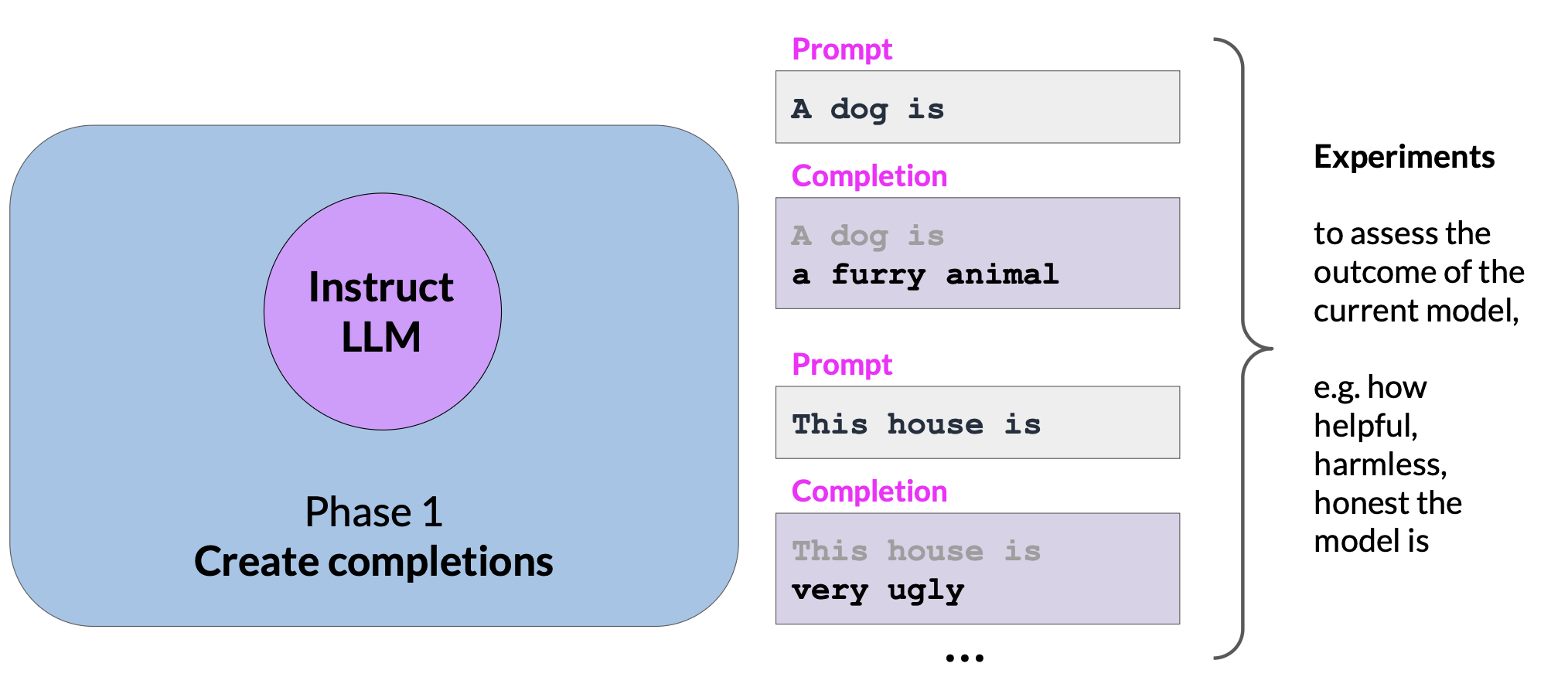

Use the selected instruct LLM with a prompt dataset to generate a number of different responses for each prompt. The prompt dataset is composed of multiple prompts, each of which gets processed by the LLM to produce a set of completions.

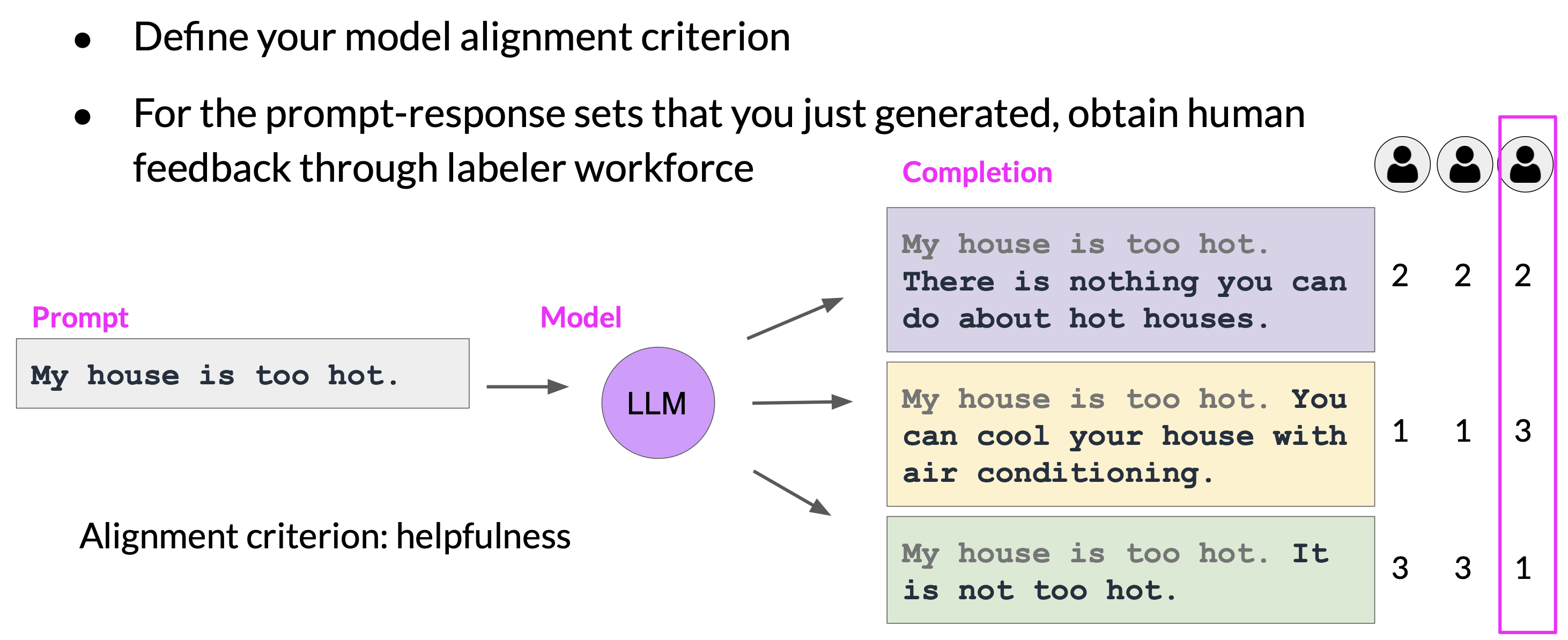
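The generation step above can be sketched as follows. This is a minimal illustration, not the course's implementation: `sample_completions` and `toy_generate` are hypothetical names, and `toy_generate` is a stand-in for sampling from the instruct LLM.

```python
import random
from typing import Callable, Dict, List


def sample_completions(
    prompts: List[str],
    generate: Callable[[str, random.Random], str],
    num_completions: int = 3,
    seed: int = 0,
) -> Dict[str, List[str]]:
    """Return a mapping from each prompt to a list of sampled completions."""
    rng = random.Random(seed)
    return {p: [generate(p, rng) for _ in range(num_completions)] for p in prompts}


def toy_generate(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for sampling one completion from the instruct LLM."""
    endings = ["a short answer.", "a detailed answer.", "an off-topic answer."]
    return f"{prompt} -> {rng.choice(endings)}"


dataset = sample_completions(["Summarize the article.", "Explain RLHF."], toy_generate)
```

Each prompt ends up with `num_completions` candidate responses, which is what the labelers rank in the next step.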

Collect human feedback

The next step is to collect feedback from human labelers on the completions generated by the LLM. This is the human feedback portion of the RLHF.

- Decide the criteria for the labelers to assess the completions on. This could be helpfulness, toxicity, etc.

- Ask the labelers to assess each completion in the dataset based on the criteria.

- Assign the same task to multiple labelers to dampen the impact of “poor” labelers who may have misunderstood the instructions.

Note that the clarity of the instructions can make a big difference to the quality of the human feedback.

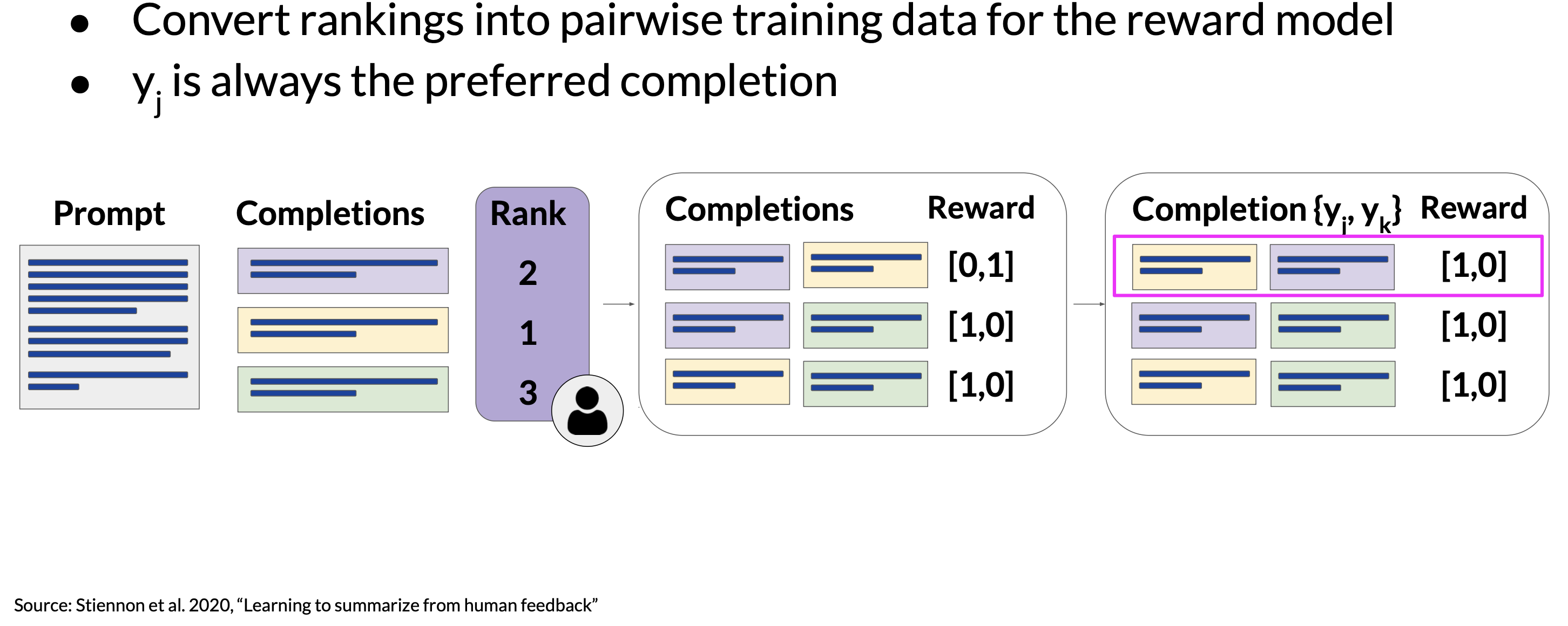
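One simple way to combine rankings from several labelers is to average the rank each completion received. This is only a sketch under that assumption; the course does not prescribe a specific aggregation rule, and `aggregate_rankings` is a hypothetical helper.

```python
from statistics import mean
from typing import Dict, List


def aggregate_rankings(rankings: List[Dict[str, int]]) -> List[str]:
    """Order completion ids from best to worst by mean rank.

    Each dict maps a completion id to the rank one labeler gave it (1 = best).
    Ties are broken alphabetically by completion id.
    """
    ids = rankings[0].keys()
    mean_rank = {cid: mean(r[cid] for r in rankings) for cid in ids}
    return sorted(mean_rank, key=lambda cid: (mean_rank[cid], cid))


labels = [
    {"a": 2, "b": 1, "c": 3},
    {"a": 2, "b": 1, "c": 3},
    {"a": 3, "b": 1, "c": 2},  # a labeler who disagrees with the other two
]
consensus = aggregate_rankings(labels)  # ["b", "a", "c"]
```

With three labelers, the single disagreeing ranking does not change the consensus order, which is the dampening effect described above.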

Prepare labeled data for training

Before training the reward model, convert the ranking data into pairwise comparisons of completions. Re-order each pair so the preferred response comes first. This is an important step because the reward model expects the preferred completion \(y_{j}\) first. Once this restructuring is complete, the human responses are in the correct format for training the reward model.

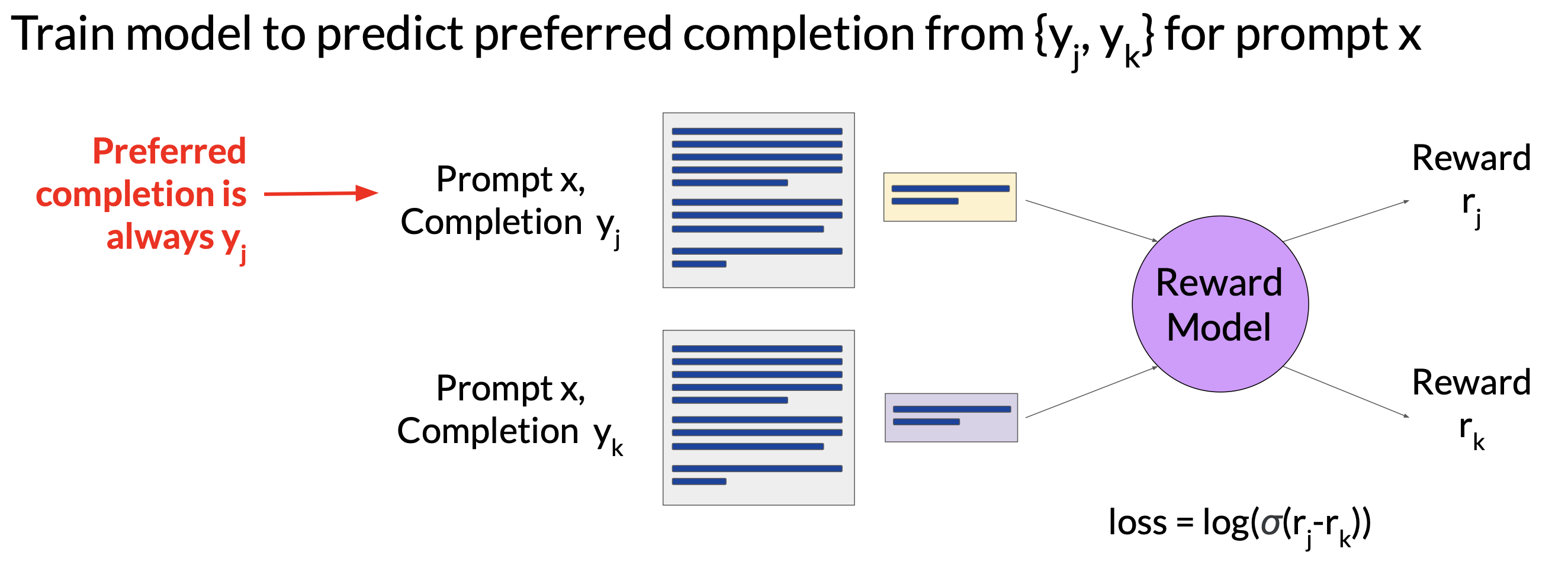
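The conversion from a ranking to ordered pairs can be sketched as below. The sketch assumes the completions for one prompt are already sorted from best to worst; `ranking_to_pairs` is a hypothetical helper name.

```python
from itertools import combinations
from typing import List, Tuple


def ranking_to_pairs(ranked: List[str]) -> List[Tuple[str, str]]:
    """Turn a best-to-worst ranking into (preferred, rejected) pairs.

    combinations() preserves input order, so the preferred completion
    (y_j) always comes first in each pair.
    """
    return list(combinations(ranked, 2))


pairs = ranking_to_pairs(["b", "a", "c"])
# [("b", "a"), ("b", "c"), ("a", "c")]
```

A ranking of N completions yields N(N-1)/2 pairs, so three ranked completions give three training examples for the reward model.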

RLHF: reward model

By the time we are done with training the reward model, we won’t need to include any more humans in the loop. Instead, the reward model will effectively take the place of the human labeler and automatically choose the preferred completions during the RLHF process.

The reward model is usually also a language model, trained with supervised learning on the pairwise comparisons that we prepared from the human labelers’ assessments of the prompts.

The human-preferred completion is always the first one, labeled \(y_{j}\). For a given prompt \(x\), the reward model learns to favor the human-preferred completion \(y_{j}\) over \(y_{k}\) by minimizing the negative log sigmoid of the reward difference, \(-\log \sigma(r_{j} - r_{k})\).

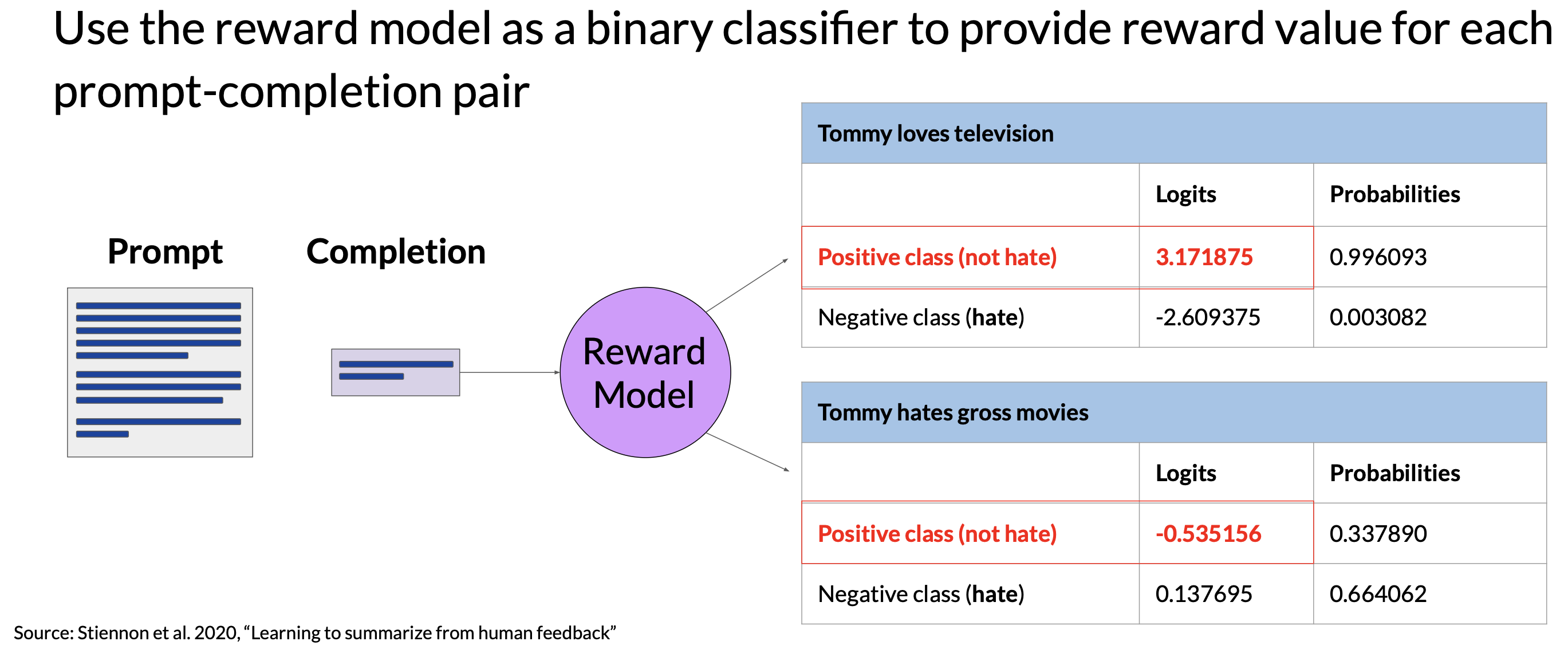
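As a rough numeric illustration of the pairwise loss, the sketch below computes \(-\log \sigma(r_{j} - r_{k})\) for a single pair of scalar rewards. In a real setup the rewards would come from the reward model and the loss would be averaged over a batch; `pairwise_loss` is a hypothetical helper.

```python
import math


def pairwise_loss(r_j: float, r_k: float) -> float:
    """Return -log(sigmoid(r_j - r_k)), where r_j is the preferred completion's reward."""
    diff = r_j - r_k
    # -log(sigmoid(d)) equals log(1 + exp(-d)); branch on the sign to avoid overflow.
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


loss_correct_order = pairwise_loss(2.0, 0.0)  # preferred completion scored higher
loss_wrong_order = pairwise_loss(0.0, 2.0)    # preferred completion scored lower
```

The loss is small when the preferred completion receives the higher reward and grows when the order is reversed, which pushes the model to score \(y_{j}\) above \(y_{k}\).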

Once the model has been trained on the human ranked prompt-completion pairs, we can use the reward model as a binary classifier to provide a set of logits (i.e. reward values) across the positive and negative classes. The logits are the unnormalized model outputs before applying any activation function. If we apply the softmax function to the logits, we get probabilities. The examples below (Figure 9) show a good and a bad example for the rewards.

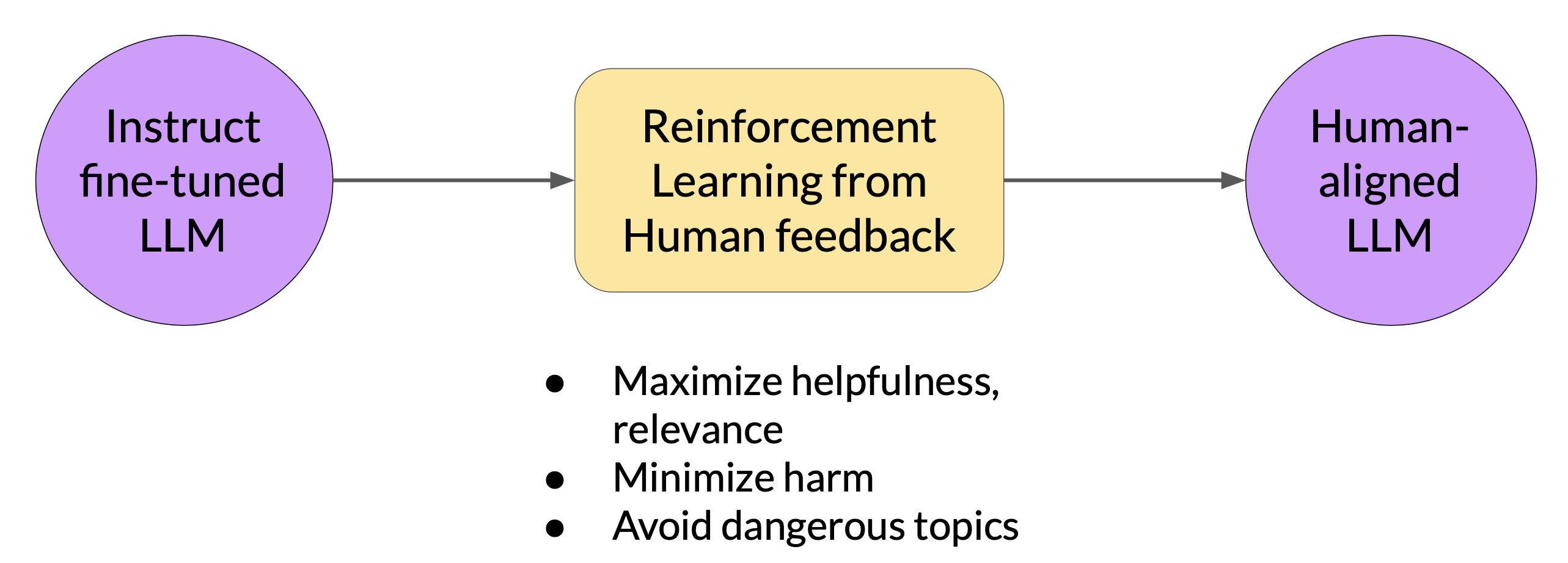

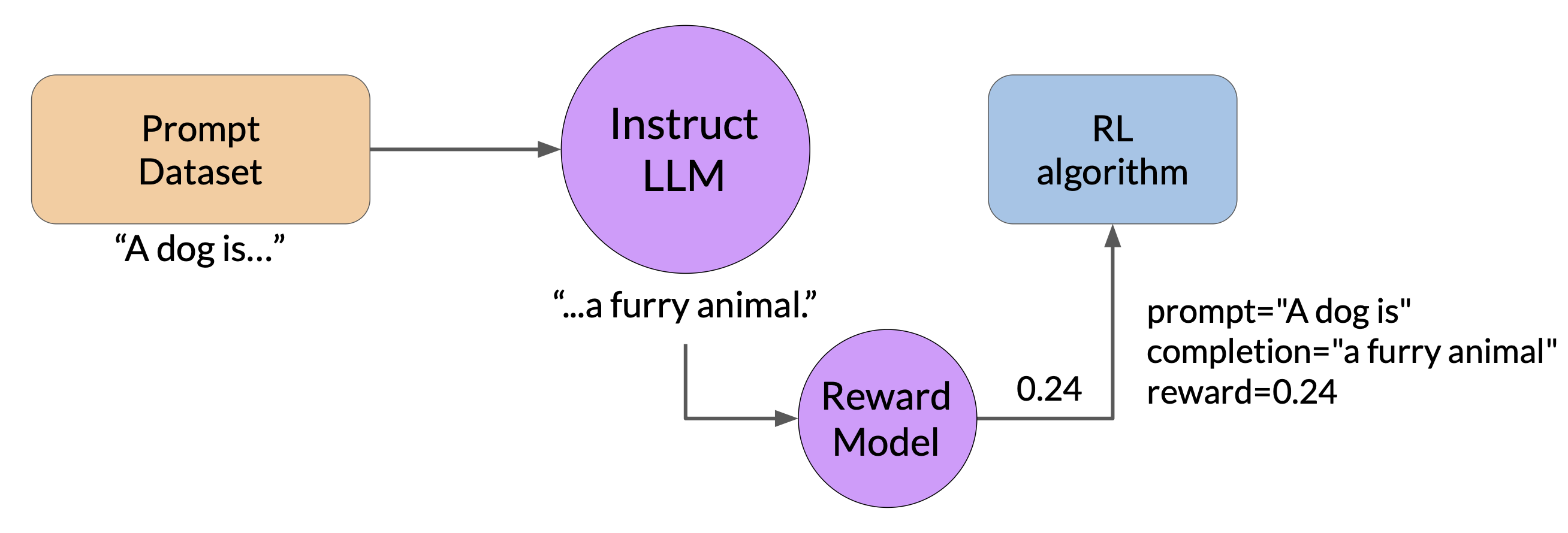
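The relationship between logits and probabilities can be sketched with a small softmax. The logit values here are made up for illustration, and treating the positive-class logit as the reward is an assumption of this sketch.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Convert unnormalized logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for [positive class, negative class]
logits = [3.0, -1.0]
reward = logits[0]        # assumption: the positive-class logit serves as the reward
probs = softmax(logits)   # probabilities of the positive and negative classes
```

A completion the reward model considers well aligned gets a high positive-class logit and therefore a positive-class probability close to 1.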

RLHF: fine-tuning

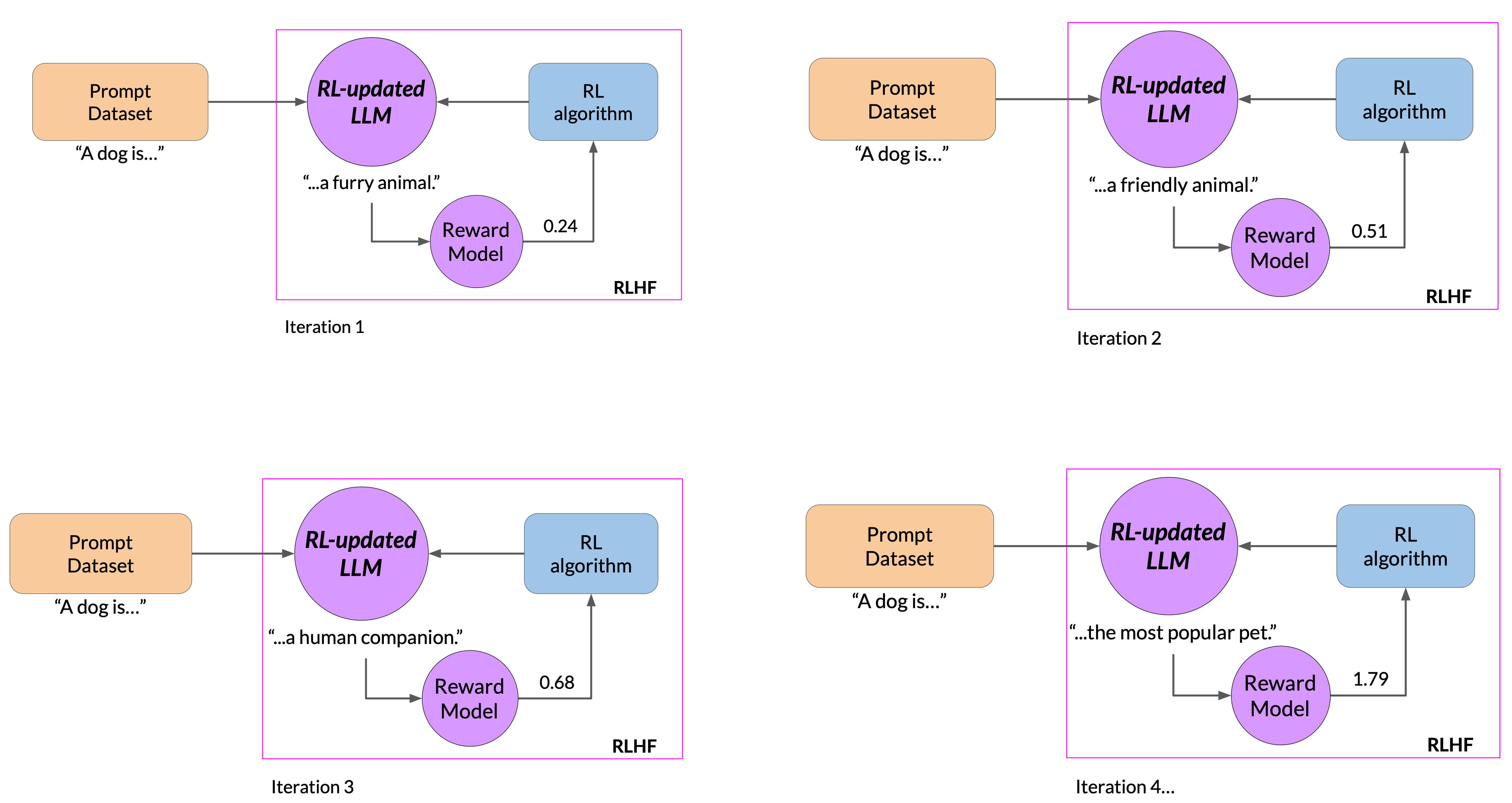

Start with a model that already has good performance on the task of interest, i.e. an instruction fine-tuned LLM. A higher reward value represents a more aligned response, and a lower value a less aligned one.

Fine-tuning proceeds over multiple iterations. In the following example (Figure 11), we can see that the completion generated by the RL-updated LLM receives a higher reward score, indicating that the updates to the weights have resulted in a more aligned completion. If the process works well, we will see the reward improving after each iteration as the model produces text that is increasingly aligned with human preferences.

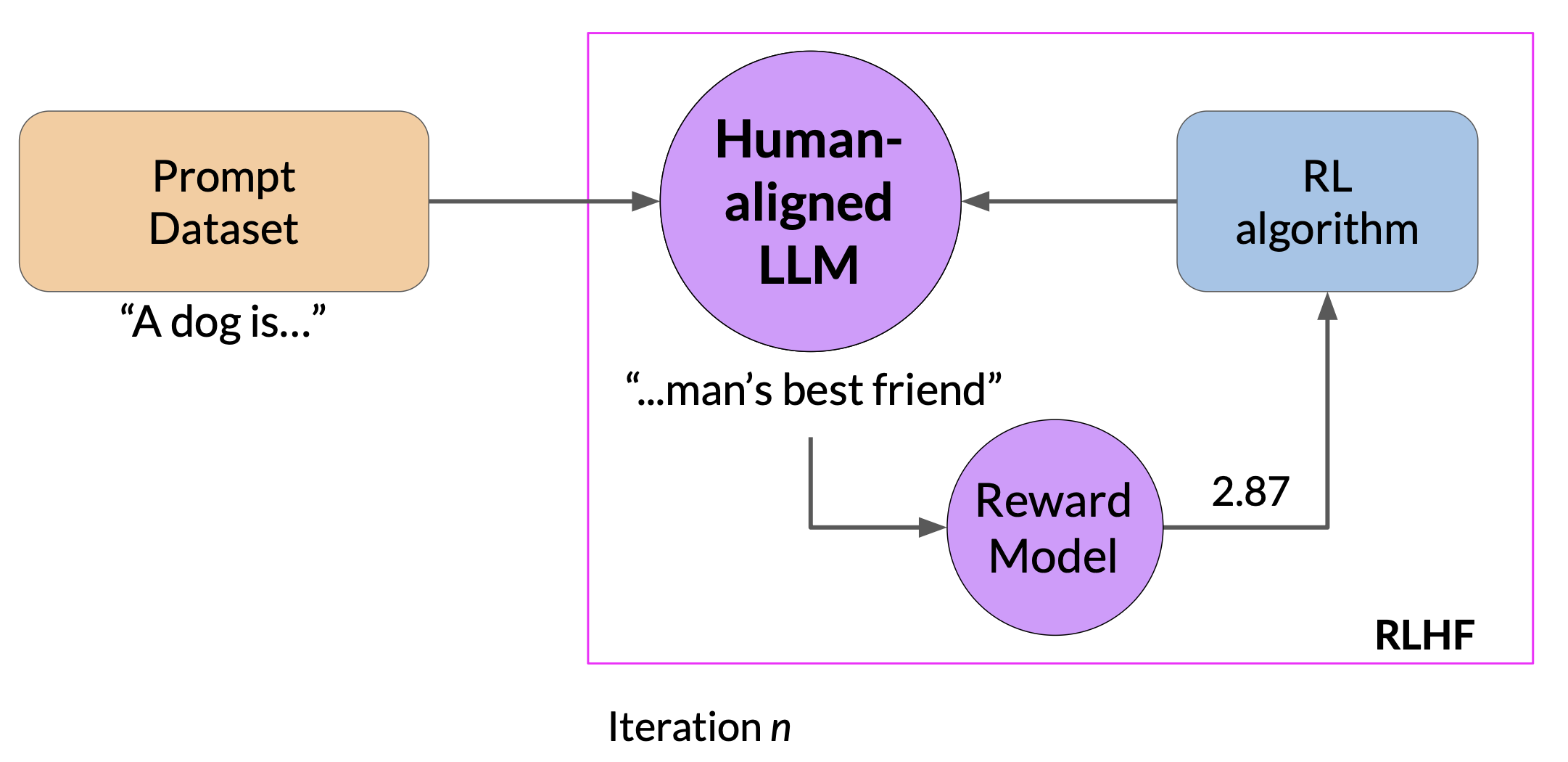

We continue the iterative process until the model is aligned according to some evaluation criterion, for example reaching a threshold value for the helpfulness we defined. We can also define a maximum number of steps, for example 20,000, as the stopping criterion.

At this point, we can refer to the fine-tuned model as the human-aligned LLM.
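The two stopping criteria can be sketched as a simple loop. Everything here is illustrative: `run_rlhf` and `toy_step` are hypothetical, and `toy_step` stands in for one RL update that returns the mean reward after that step.

```python
from typing import Callable, Tuple


def run_rlhf(
    step_fn: Callable[[int], float],
    reward_threshold: float,
    max_steps: int = 20_000,
) -> Tuple[int, float]:
    """Run updates until the mean reward reaches the threshold or max_steps is hit.

    Returns (number of steps run, last mean reward).
    """
    mean_reward = float("-inf")
    for step in range(1, max_steps + 1):
        mean_reward = step_fn(step)
        if mean_reward >= reward_threshold:
            return step, mean_reward
    return max_steps, mean_reward


def toy_step(step: int) -> float:
    """Hypothetical update whose mean reward rises by 0.01 per step."""
    return step / 100


steps, final_reward = run_rlhf(toy_step, reward_threshold=0.5)
```

Whichever criterion is met first ends training, so the step limit acts as a safety net when the reward threshold is never reached.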

Proximal policy optimization

Proximal policy optimization (PPO) is the most widely used algorithm for the reinforcement learning step in RLHF pipelines. PPO optimizes a policy, in this case the LLM, to be more aligned with human preferences, so the goal is to update the policy to maximize the reward. Over many iterations, PPO makes updates to the LLM. The updates are small and within a bounded region, resulting in an updated LLM that is close to the previous version. Keeping the changes within this small region results in more stable learning.

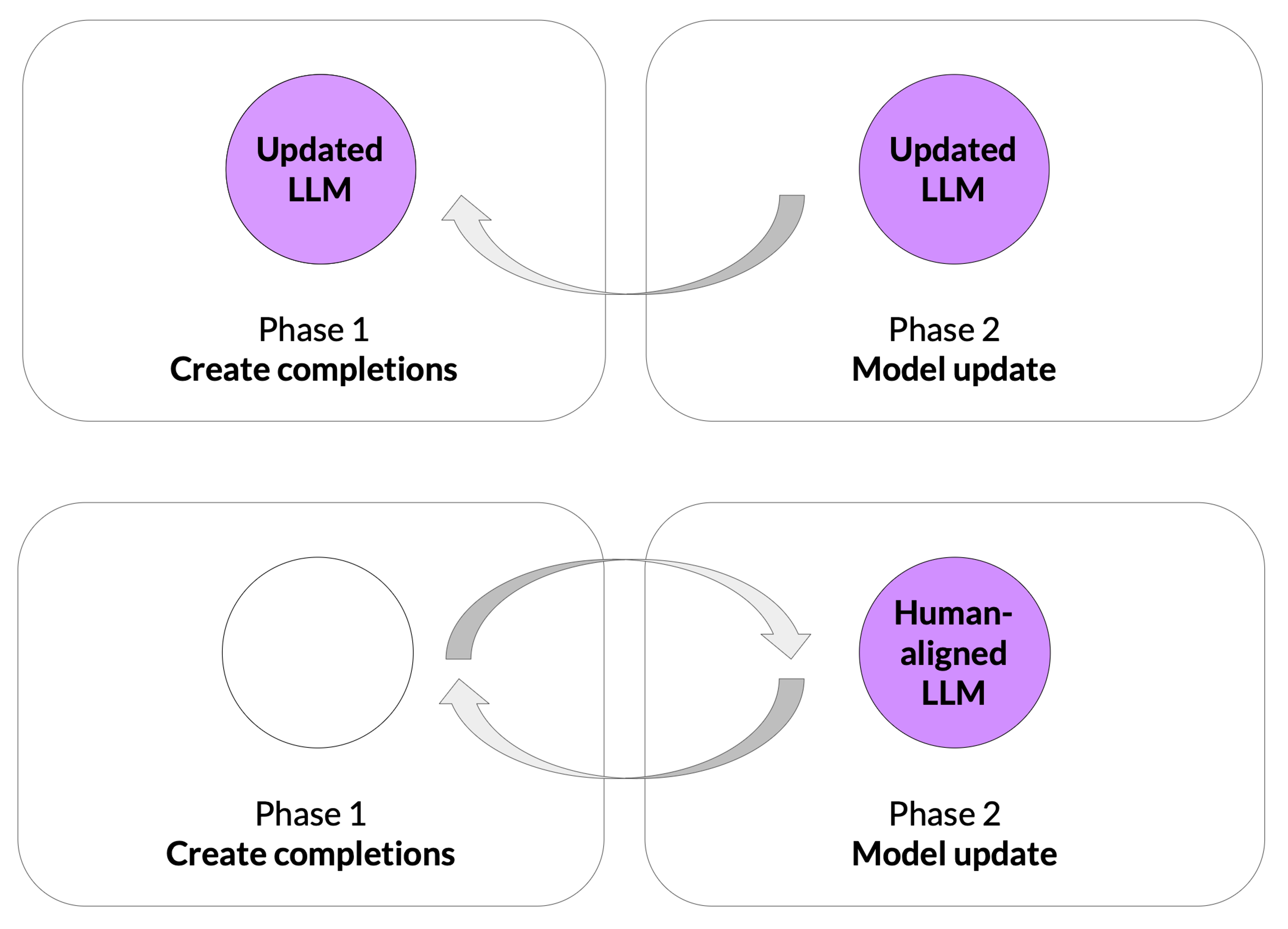

At a high level, each cycle of PPO goes through two phases.

PPO Phase 1: Create completions

In phase 1, the LLM is used to carry out a number of experiments to complete the given prompts. These experiments allow us to update the LLM against the reward model in phase 2.

Recall that the reward model captures human preference. For example, the reward can define how helpful, harmless, and honest the responses are. The expected reward of a completion is an important quantity used in the PPO objective. We estimate this quantity through a separate head of the LLM called the value function.

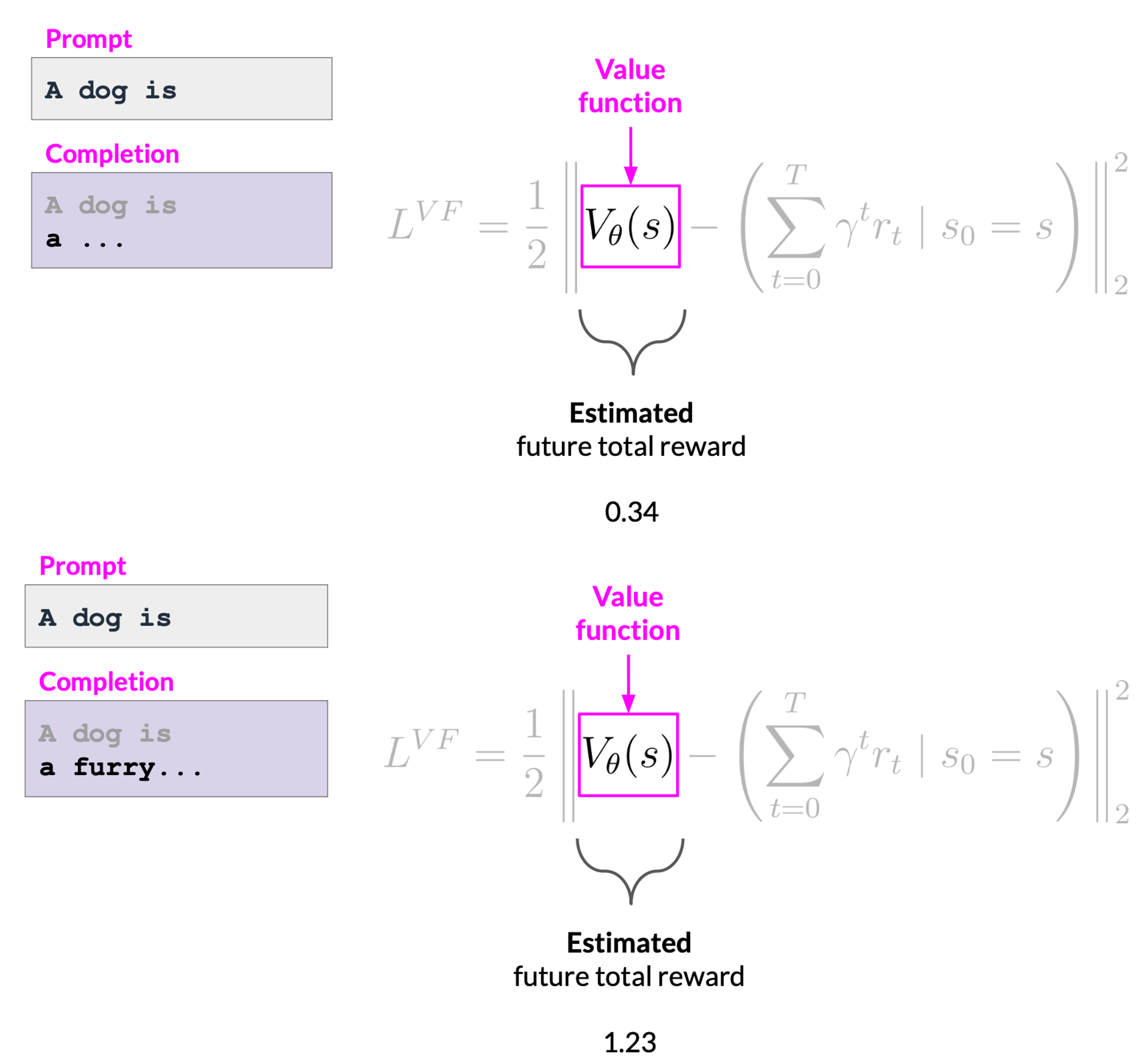

How to calculate value loss:

Assume a number of prompts are given. We first generate the LLM responses to the prompts, then calculate the reward for each prompt completion using the reward model.

The value function estimates the expected total reward for a given state \(s\). In other words, as the LLM generates each token of a completion, we want to estimate the total future reward based on the current sequence of tokens. Think of this as a baseline for evaluating the quality of completions against the alignment criteria. In the following example, after the first token of the completion, the estimated total future reward is 0.34; with the next generated token, the estimate increases to 1.23.

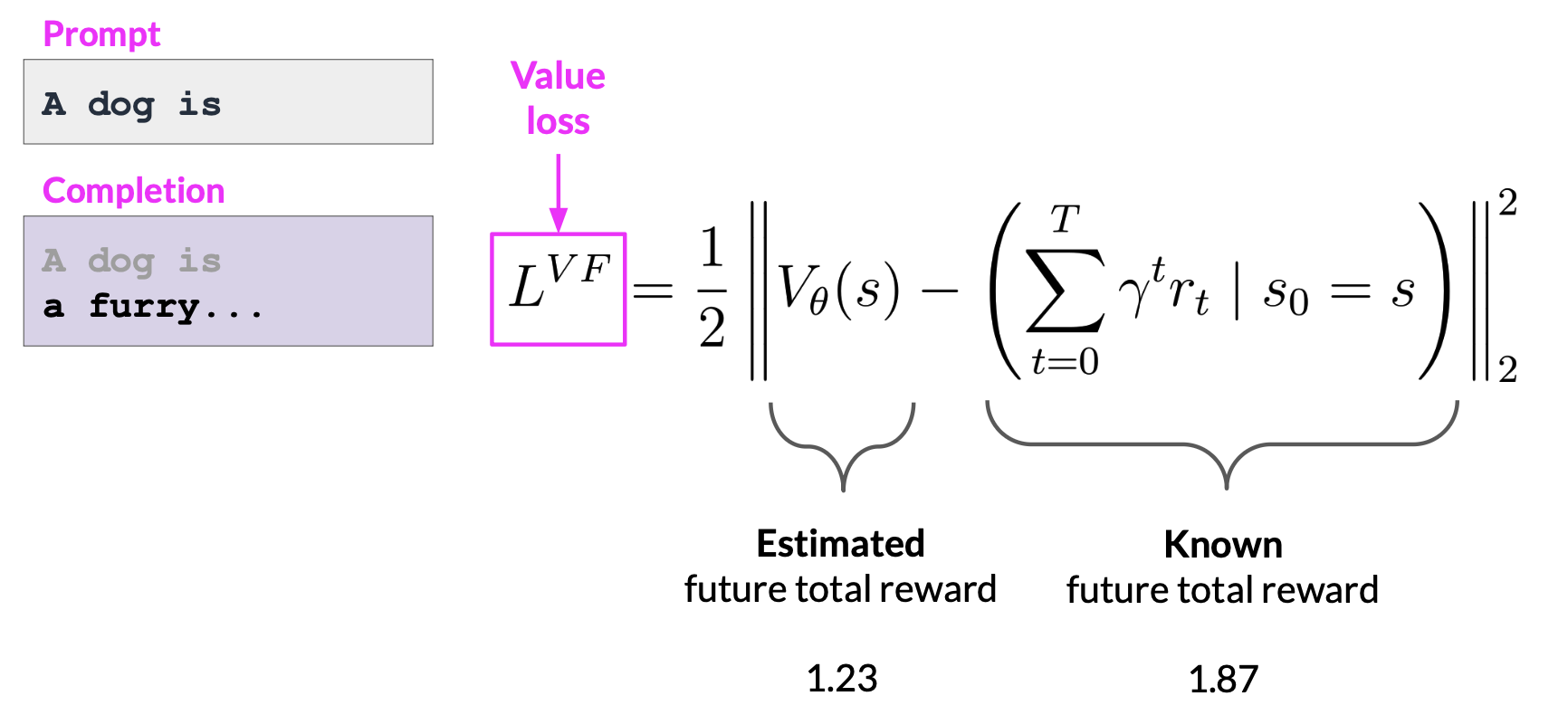

The goal is to minimize the value loss, which measures the difference between the actual future total reward and the value function's estimate of it.

The value loss makes the estimates for future rewards more accurate. The value function is then used in Advantage Estimation in phase 2.
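A common way to write the value loss is as a squared error between the estimated and the actual future reward, which is what this sketch assumes. The estimates 0.34 and 1.23 come from the example above; the actual future reward of 1.87 is a made-up value for illustration.

```python
from typing import List


def value_loss(estimated: List[float], actual: List[float]) -> float:
    """Mean of 0.5 * (estimate - actual)^2 over token positions."""
    squared = [0.5 * (v - r) ** 2 for v, r in zip(estimated, actual)]
    return sum(squared) / len(squared)


estimates = [0.34, 1.23]       # value-head estimates after the first and second token
actual_future = [1.87, 1.87]   # hypothetical total reward known once the completion ends
loss = value_loss(estimates, actual_future)
```

The second estimate is closer to the actual reward than the first, so it contributes less to the loss; training the value head pushes both estimates toward the reward that was actually obtained.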

PPO Phase 2: Model update

In phase 2, we make small updates to the model and evaluate the impact of those updates on the alignment goal. The updates to the model weights are guided by the prompt completions, losses, and rewards.

PPO keeps the model updates within a small region called the trust region. This is where the proximal aspect of PPO comes into play. Ideally, this series of small updates will move the model towards higher rewards.

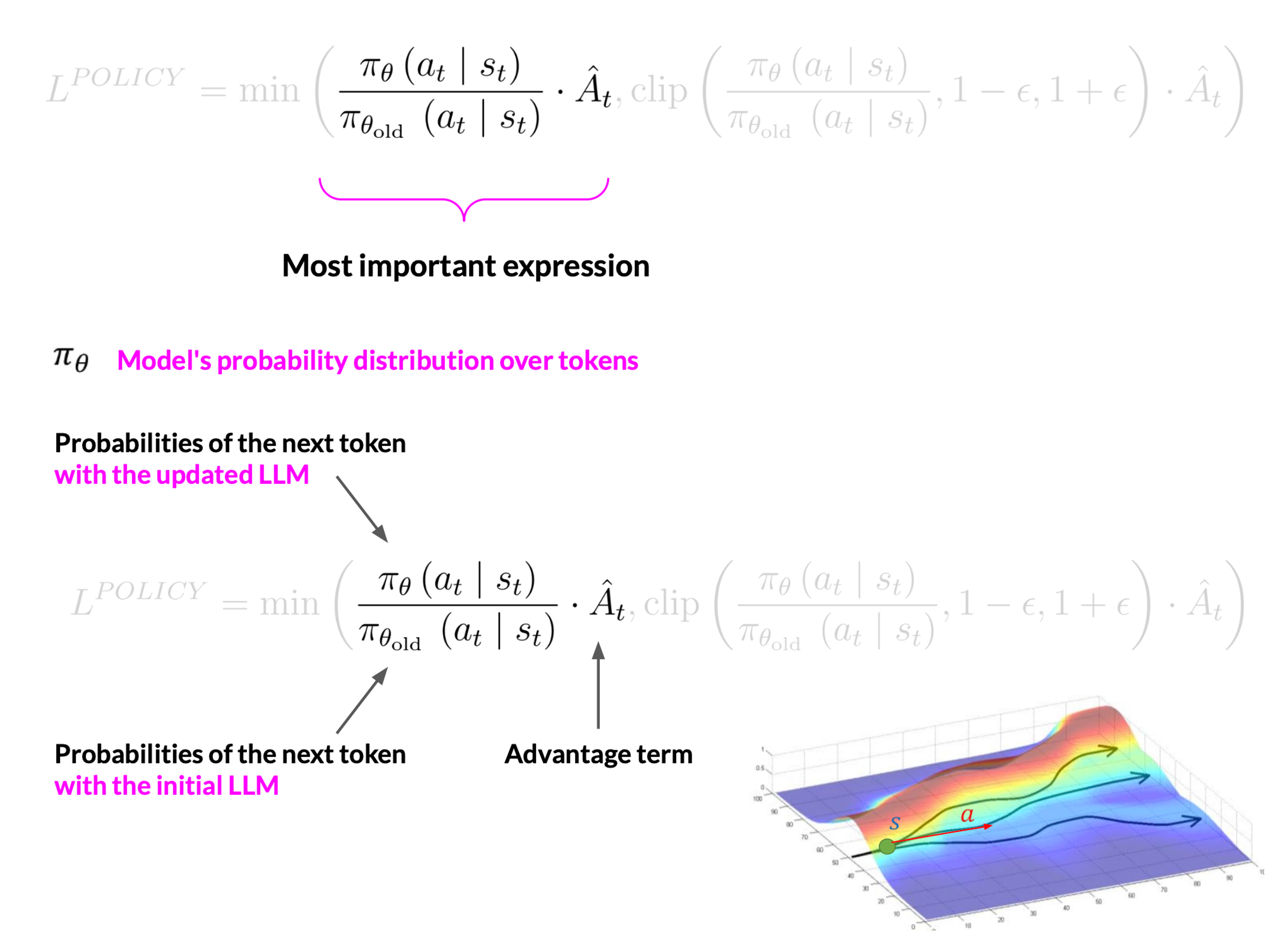

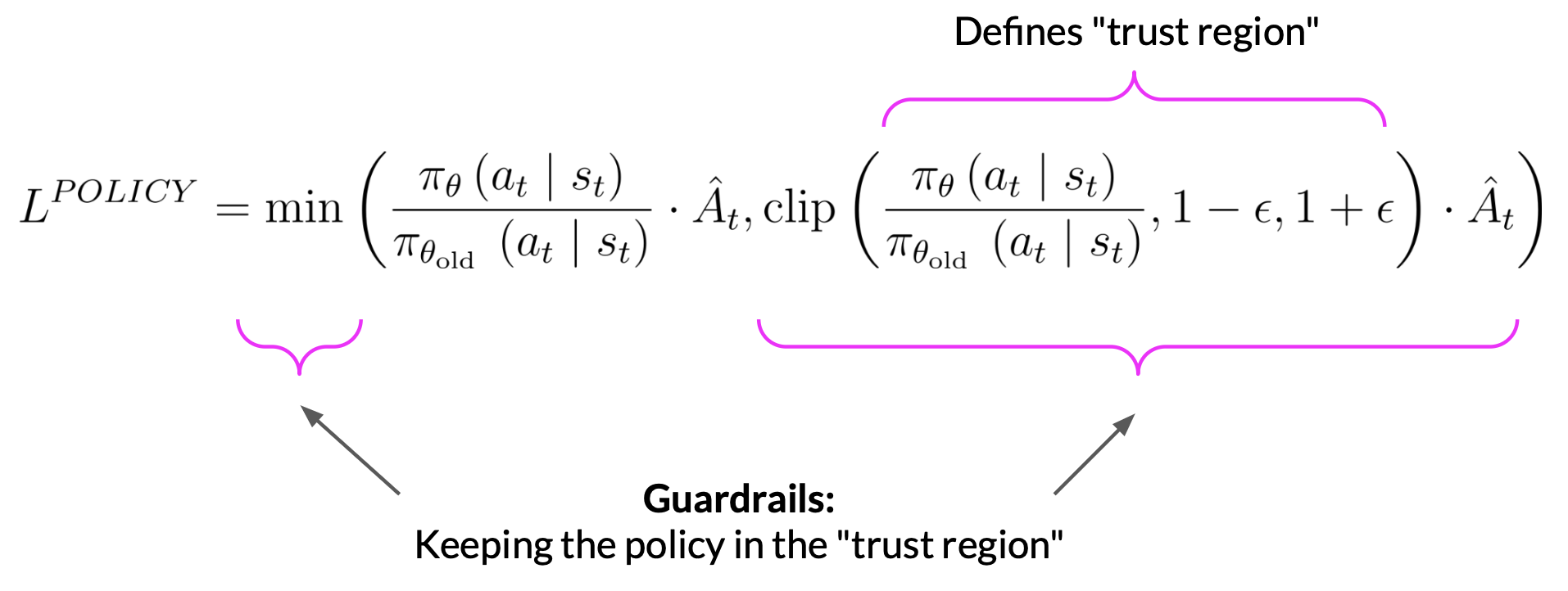

The policy loss is the main objective that the PPO algorithm tries to optimize during training.

Calculate policy loss

- \(a_{t}\) is the next token, \(s_{t}\) is the completed prompt up to the token \(t\).

- The denominator of the first term is the probability of the next token under the initial version of the LLM, which is frozen. The numerator is the probability of the next token under the updated LLM, whose weights we can change to obtain a better reward. \(\hat{A_{t}}\) is called the estimated advantage of a given choice of action. The advantage term estimates how much better or worse the current action is compared to all possible actions at that state. So we look at the expected future rewards of a completion following the new token, and we estimate how advantageous this completion is compared to the rest.

- A positive \(\hat{A_{t}}\) means the suggested token is better than the average. Therefore, increasing the probability of the current token seems like a good strategy that leads to higher rewards. This translates to maximizing the expression we have here. If the suggested token is worse than average, the advantage will be negative. Then maximizing the expression will demote the token, which is the correct strategy.

- So the overall conclusion is that maximizing this expression results in a better aligned LLM.

- Directly maximizing the expression would lead to problems because our calculation is reliable under the assumption that our advantage estimations are valid. The advantage estimations are valid only when the old and new policies are close to each other. This is where the rest of the terms come into play.

- The second term defines a region where the two policies are near each other. These extra terms are guardrails, and simply define a region in proximity to the LLM, where our estimates have small errors. This is called the trust region. These extra terms ensure that we are unlikely to leave the trust region.

- In summary, optimizing the PPO policy objective results in a better LLM without overshooting to unreliable regions.

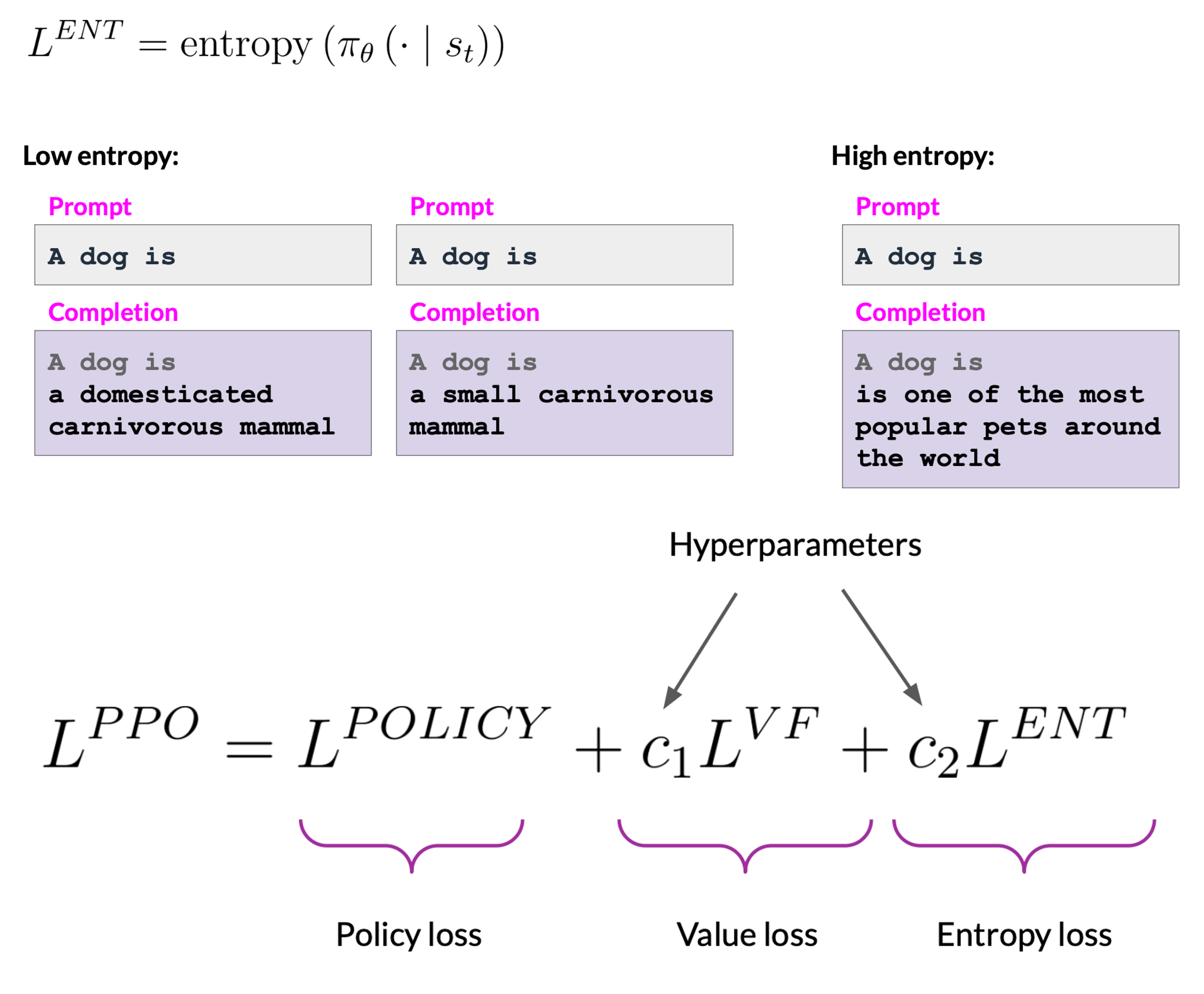
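For a single token, the clipped objective described above can be sketched as below. This follows the standard clipped surrogate from the PPO paper; the clipping range `epsilon=0.2` is a commonly used value and an assumption here, and `ppo_clipped_objective` is a hypothetical helper.

```python
def ppo_clipped_objective(
    prob_new: float,
    prob_old: float,
    advantage: float,
    epsilon: float = 0.2,
) -> float:
    """Per-token PPO objective: min(ratio * A, clip(ratio, 1 - eps, 1 + eps) * A)."""
    ratio = prob_new / prob_old  # updated LLM probability over frozen LLM probability
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    return min(ratio * advantage, clipped_ratio * advantage)


# Good token (positive advantage) whose probability doubled: the gain is capped.
capped_gain = ppo_clipped_objective(prob_new=0.4, prob_old=0.2, advantage=1.0)
# Bad token (negative advantage) whose probability halved: the benefit is capped too.
capped_drop = ppo_clipped_objective(prob_new=0.1, prob_old=0.2, advantage=-1.0)
```

Once the probability ratio moves outside the range from 1 - epsilon to 1 + epsilon in the direction that improves the objective, the objective stops increasing, so there is no incentive to push the policy further from the old one in a single update.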

Calculate entropy loss

- While the policy loss moves the model toward the alignment goal, the entropy loss allows the model to maintain creativity. If we keep entropy low, we may end up always completing the prompt in the same way. This is similar to the temperature setting. The difference is that temperature influences model creativity at inference time, while entropy influences model creativity during training.
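To make the notion of entropy concrete, the sketch below compares the entropy of a peaked and a uniform next-token distribution. The four-token vocabulary and the probabilities are made up for illustration.

```python
import math
from typing import List


def entropy(probs: List[float]) -> float:
    """Shannon entropy in nats; zero-probability tokens contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)


peaked = [0.97, 0.01, 0.01, 0.01]   # the model almost always picks the same token
uniform = [0.25, 0.25, 0.25, 0.25]  # the model is equally open to every token

low_entropy = entropy(peaked)
high_entropy = entropy(uniform)
```

An entropy term in the training objective rewards distributions closer to the second case, which discourages the policy from collapsing onto a single completion.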

Once the model weights are updated, PPO starts a new cycle. For the next iteration, the LLM is replaced with the updated LLM, and a new PPO cycle starts. After many iterations, we arrive at the human-aligned LLM.

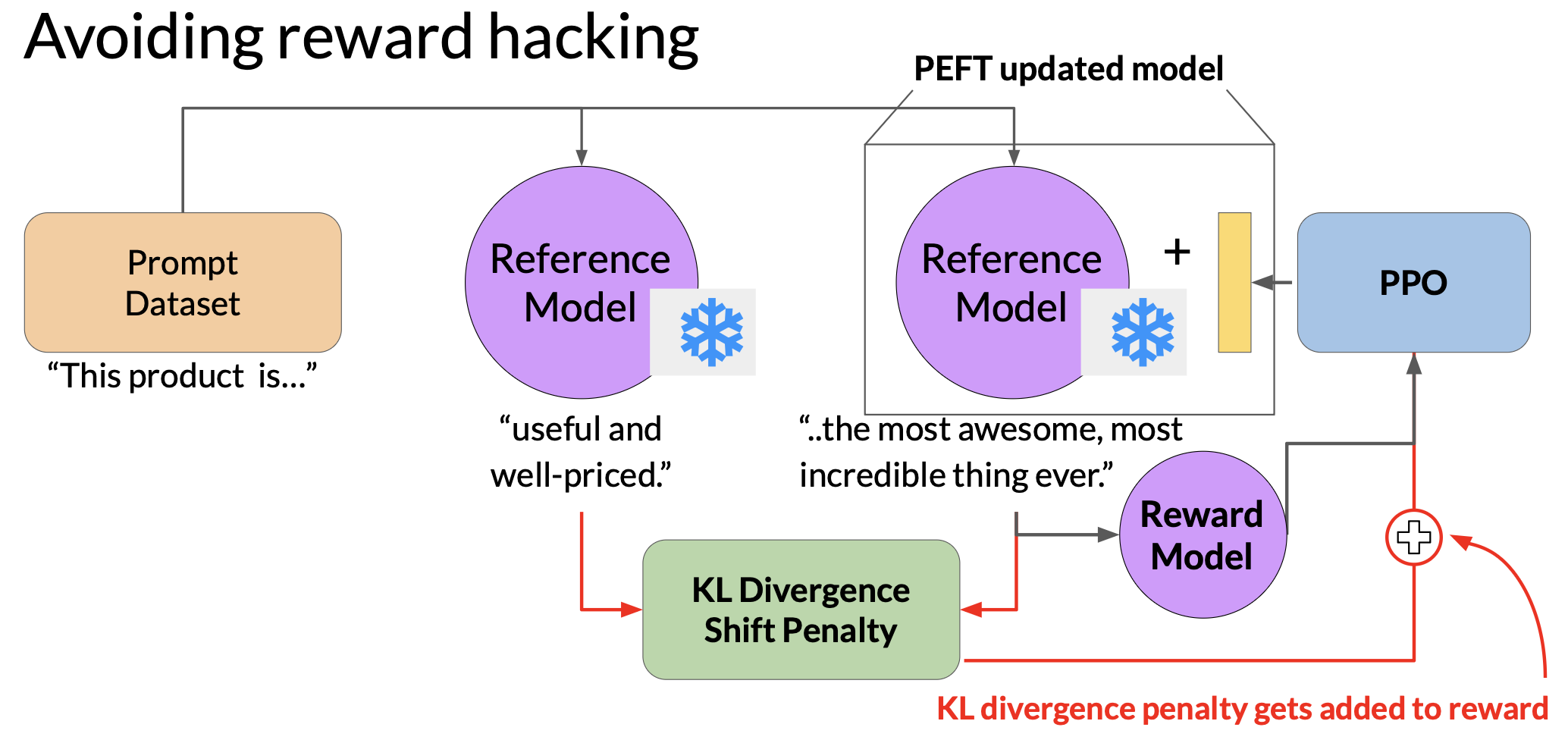

RLHF: reward hacking

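Reward hacking occurs when the policy learns to maximize the reward in ways that do not match the original objective, for example by producing exaggerated or unnatural text that happens to score well with the reward model. As the policy tries to maximize the reward, it can diverge too much from the original language model.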

To avoid reward hacking, we can use the instruct model as a reference model. The weights of the reference model are frozen, and are not updated during iterations of RLHF. This way, we always maintain a single reference model to compare to.

During training, each prompt is passed to both models, generating one completion from the reference LLM and one from the intermediate, RL-updated LLM. At this point, we can compare the two completions and calculate a value called the KL divergence. KL divergence is a statistical measure of how different two probability distributions are. We can use it to compare the completions of the two models and determine how much the updated model has diverged from the reference model.

KL divergence is calculated for each generated token across the whole vocabulary of the LLM, which can easily be tens or hundreds of thousands of tokens. Although softmax gives a probability for every token, in practice most of the probability mass is concentrated on a small number of likely tokens, making the distribution effectively sparse. The calculation is still relatively expensive, so it benefits from running on GPUs.

Once we calculate the KL divergence between the two models, we add it as a term to the reward calculation. This will penalize the RL updated model if it shifts too far from the reference LLM and generates completions that are too different.
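The penalty can be sketched for toy distributions as follows. The direction KL(updated || reference), the coefficient `beta`, and the helper names are assumptions of this sketch; real implementations work on the full vocabulary and often estimate the divergence from log-probabilities of the sampled tokens.

```python
import math
from typing import List


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) in nats for two distributions over the same vocabulary.

    Assumes q is strictly positive wherever p is, which holds for softmax outputs.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def penalized_reward(reward: float, kl_per_token: List[float], beta: float = 0.1) -> float:
    """Subtract the summed per-token KL, scaled by beta, from the reward model's score."""
    return reward - beta * sum(kl_per_token)


reference = [0.5, 0.3, 0.2]   # next-token distribution of the frozen reference LLM
updated = [0.8, 0.1, 0.1]     # next-token distribution of the RL-updated LLM

kl = kl_divergence(updated, reference)
final_reward = penalized_reward(reward=1.0, kl_per_token=[kl], beta=0.1)
```

The further the updated distribution drifts from the reference, the larger the KL term and the smaller the final reward, which counteracts completions that score well only by exploiting the reward model.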

Note that we need two full copies of the LLM to calculate KL divergence: the frozen reference LLM and the RL-updated PPO LLM. We can benefit from combining RLHF with PEFT. In this case, we only update the weights of a PEFT adapter, not the full weights of the LLM. This means we can reuse the same underlying LLM for both the reference model and the PPO model, which we update with trained PEFT parameters. This reduces the memory footprint during training by approximately half.

Scaling human feedback

Constitutional AI is a method for training models using a set of rules and principles that govern the model’s behavior. We train the model to self-critique and revise its response to comply with those principles.

Constitutional AI is useful not only for scaling feedback but also for addressing some unintended consequences of RLHF. For example, depending on how the prompt is structured, an aligned model may end up revealing harmful information as it tries to provide the most helpful response it can. Providing the model with a set of constitutional principles can help the model balance these competing interests and minimize the harm.

References

- Training language models to follow instructions with human feedback

- Paper by OpenAI introducing a human-in-the-loop process to create a model that is better at following instructions (InstructGPT).

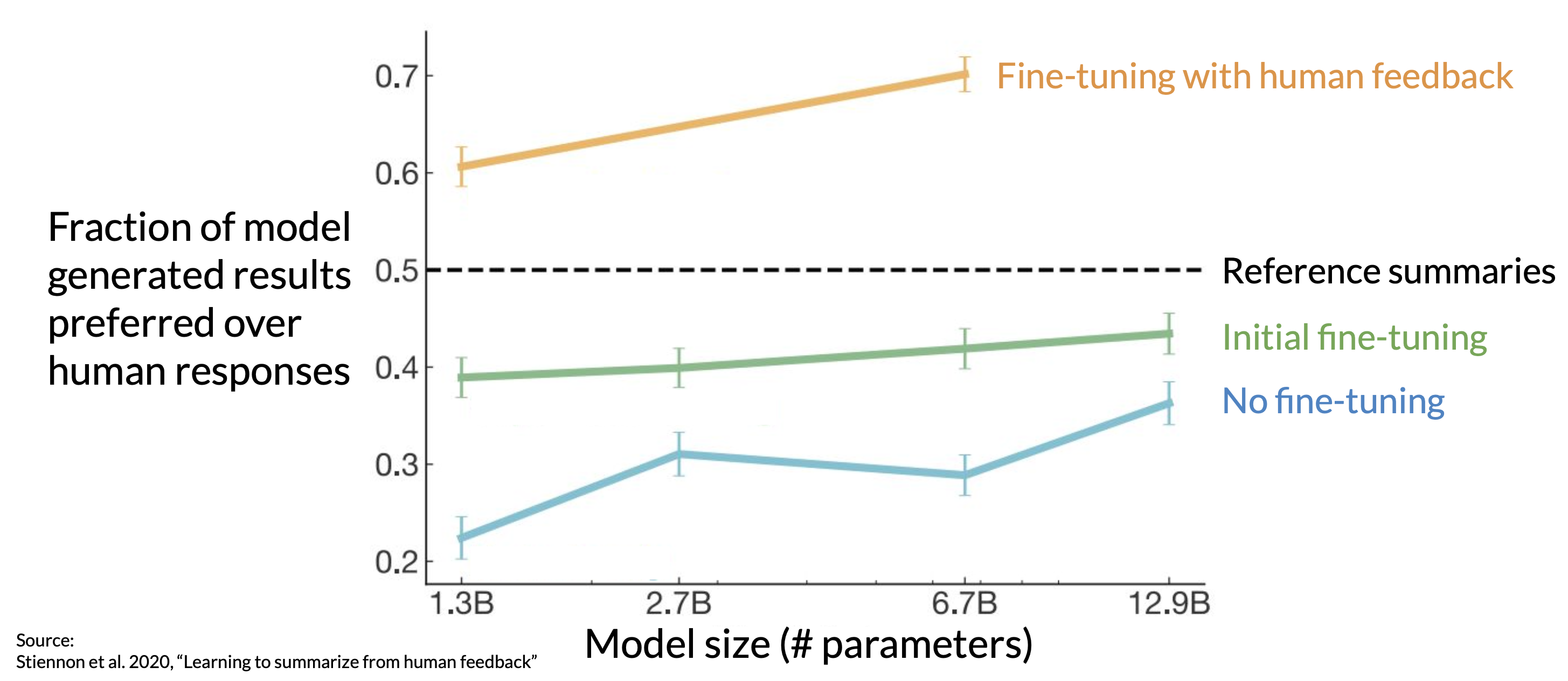

- Learning to summarize from human feedback

- This paper presents a method for improving language model-generated summaries using a reward-based approach, surpassing human reference summaries.

- Proximal Policy Optimization Algorithms

- The paper from researchers at OpenAI that first proposed the PPO algorithm. The paper discusses the performance of the algorithm on a number of benchmark tasks including robotic locomotion and game play.

- Direct Preference Optimization: Your Language Model is Secretly a Reward Model

- This paper presents a simpler and effective method for precise control of large-scale unsupervised language models by aligning them with human preferences.

- Constitutional AI: Harmlessness from AI Feedback

- This paper introduces a method for training a harmless AI assistant without human labels, allowing better control of AI behavior with minimal human input.